
"Creativity is the fragrance of individual freedom." - Osho

Hello friends!

I know I usually release on Saturday for the week, but between family and work, I didn't have enough time this weekend to finish editing the video for the next release.

And it is here! My conversation with Dave Yarwood is live on YouTube. It led us into some fascinating discussions on generative music. I'd especially recommend the part about using sound as a way to sense a dataset. Dave did this for a demo at Strange Loop in 2019, which you can find here.
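One simple way to "hear" a dataset is parameter-mapping sonification: each value is mapped onto a sound property such as pitch. The Python sketch below is a hypothetical illustration of that idea, not Dave's Strange Loop demo. It assumes a linear mapping from the data range onto MIDI notes 48 to 84 and converts notes to frequencies in 12-tone equal temperament, with A4 (MIDI note 69) at 440 Hz.

```python
def value_to_midi(value, lo, hi, low_note=48, high_note=84):
    """Linearly map a data value in [lo, hi] onto a MIDI note range (C3..C6 by default)."""
    if hi == lo:
        return low_note  # a flat dataset maps to a single pitch
    t = (value - lo) / (hi - lo)
    return round(low_note + t * (high_note - low_note))


def midi_to_hz(note):
    """12-tone equal temperament, with A4 (MIDI note 69) tuned to 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)


def sonify(data):
    """Return one (midi_note, frequency_hz) pair per data point."""
    lo, hi = min(data), max(data)
    notes = [value_to_midi(v, lo, hi) for v in data]
    return [(n, midi_to_hz(n)) for n in notes]


if __name__ == "__main__":
    readings = [3.2, 4.8, 9.1, 7.5, 2.0]  # hypothetical dataset
    for note, freq in sonify(readings):
        print(f"MIDI {note:3d}  {freq:8.2f} Hz")
```

Rising values become rising pitches, so trends and outliers can be heard as melodic contour; the note range and the linear mapping are arbitrary choices you would tune to the data.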

Dave Yarwood Conversation on Generative Music

Dave Yarwood - Dave is a software engineer and musician who developed a music composition framework called Alda. Over the hour, we spoke about his work on Alda, generative music, sonic visualization, his inspirations, and influences in the world of generative music. Below you will find the high-level topics we covered.

Just in case you don’t know where to start:

SuperCollider [Multi-platform] - Platform for audio synthesis and algorithmic composition.

ChucK - Strongly-timed, concurrent, and on-the-fly music programming language.

TidalCycles - Domain-specific language for live coding of patterns.

Sonic Pi - The live coding music synth for everyone.

Csound - A sound and music computing system.

Orca - Live coding environment to quickly create procedural sequencers.

Handel - A small procedural programming language for writing songs in the browser.

Max - A fantastic node-based visual programming environment made for experimentation and invention.

Alda - A text-based programming language for music composition.

We should be caught back up this weekend with another edition of the newsletter.

I hope you have an excellent rest of the week!

Chris

Inspirations

Here are a few inspirations from Instagram over the last year or so, some I have followed for quite a while and some I only started following recently. Make sure to check out the profiles below to hear some of the sounds they have generated with their systems.

IG: neel.shivdasani / Pinkfluffybunniesnoise / Simone Ghetti / 0.0.0_music

📸 Generative Graphics

GANGogh: Creating Art with GANs

Generative Adversarial Networks (GANs) were introduced by Ian Goodfellow et al. in a 2014 paper. GANs address the relative lack of success of deep generative models compared with deep discriminative models. The authors attribute this discrepancy to the intractable maximum likelihood estimation that most generative models require. GANs are therefore set up to use the strength of deep discriminative models to bypass maximum likelihood estimation, and so avoid the main failings of traditional generative models. They do this by training a generative model and a discriminative model that compete with each other. In essence, “The generative model can be thought of as analogous to a team of counterfeiters, trying to produce fake currency and use it without detection, while the discriminative model is analogous to the police, trying to detect the counterfeit currency. Competition in this game drives both teams to improve their methods until the counterfeits are indistinguishable from the genuine articles.” In more concrete terms:
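In Goodfellow et al.'s formulation, the discriminator D tries to maximize, and the generator G tries to minimize, the value V(D, G) = E_{x~p_data}[log D(x)] + E_{z~p_z}[log(1 - D(G(z)))]. The paper shows that for a fixed generator the best discriminator is D*(x) = p_data(x) / (p_data(x) + p_g(x)), and that at this optimum V equals -log 4 + 2 * JSD(p_data || p_g), so the generator does best by matching the data distribution. The Python sketch below is not GANGogh's code; it assumes tiny discrete distributions in place of neural networks and simply checks these identities numerically.

```python
import math


def gan_value(p_data, p_g, d):
    """V(D, G) for discrete distributions: E_{x~p_data}[log D(x)] + E_{x~p_g}[log(1 - D(x))].

    The generator is represented directly by the distribution p_g it produces.
    """
    real_term = sum(pd * math.log(dx) for pd, dx in zip(p_data, d) if pd > 0)
    fake_term = sum(pg * math.log(1.0 - dx) for pg, dx in zip(p_g, d) if pg > 0)
    return real_term + fake_term


def optimal_discriminator(p_data, p_g):
    """Best response to a fixed generator: D*(x) = p_data(x) / (p_data(x) + p_g(x))."""
    return [pd / (pd + pg) if pd + pg > 0 else 0.5 for pd, pg in zip(p_data, p_g)]


def jensen_shannon(p, q):
    """Jensen-Shannon divergence (natural log) between two discrete distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a_dist, b_dist):
        return sum(a * math.log(a / b) for a, b in zip(a_dist, b_dist) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


if __name__ == "__main__":
    real = [0.1, 0.4, 0.5]  # hypothetical "real data" distribution over 3 outcomes
    fake = [0.3, 0.3, 0.4]  # hypothetical generator distribution
    v = gan_value(real, fake, optimal_discriminator(real, fake))
    print(v, -math.log(4) + 2 * jensen_shannon(real, fake))  # the two values agree
    # When the generator matches the data, D* = 1/2 everywhere and V = -log 4.
    print(gan_value(real, real, optimal_discriminator(real, real)), -math.log(4))
```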

🏛️ Exhibits / Installations

Live Coding an Ambient Electro-Set

The video above does a great job of taking a sonic library and generating an electro set from it. It makes good music for studying or getting into a flow state while working. It also does an excellent job of showing what can be done with these tools.

DOMMUNE Tokyo

Watch a series of live coders from Yorkshire in the UK and from Tokyo battle it out in back-to-back live coding sessions. Expect improvised beats, melodies, and noise in a variety of genres, all executed through live coding in various systems. It's all improvised live music, not DJing, so no one will have ever heard any of this music before, not even the performers. It's guaranteed to be surprising and exciting!

🚤 Motion

🔖 Articles and Tutorials

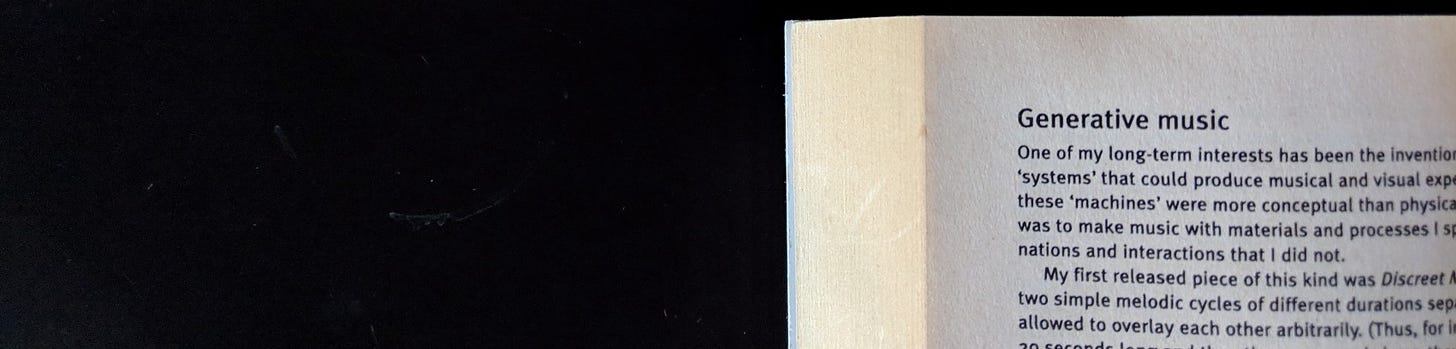

Introduction to Generative Music

“One of my long-term interests has been the invention of ‘machines’ and ‘systems’ that could produce musical and visual experiences… [T]he point of them was to make music with materials and processes I specified, but in combinations and interactions that I did not.” — From “Generative Music” in A Year With Swollen Appendices
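Brian Eno's best-known example of this principle is layering loops of different, unrelated lengths (as on Music for Airports), so that material he specified recombines in ways he did not dictate. The Python sketch below is a hypothetical, minimal illustration of that idea with made-up loops measured in steps: each loop just wraps around, and the combined texture only repeats after the least common multiple of the loop lengths (`math.lcm` with several arguments needs Python 3.9+).

```python
from math import lcm

# Hypothetical material: three short loops of different lengths (None = rest).
LOOPS = {
    "piano": ["C4", None, None, "E4", None],             # 5 steps
    "voice": [None, "G4", None, None, None, None, "A4"],  # 7 steps
    "synth": ["F3"] + [None] * 10,                        # 11 steps
}


def sounding_at(step, loops):
    """Notes sounding at a given step; each loop wraps around independently."""
    return frozenset(
        p[step % len(p)] for p in loops.values() if p[step % len(p)] is not None
    )


def cycle_length(loops):
    """The combined pattern only repeats after the LCM of the loop lengths."""
    return lcm(*(len(p) for p in loops.values()))


if __name__ == "__main__":
    print("combined cycle:", cycle_length(LOOPS), "steps")  # 5 * 7 * 11 = 385
    for step in range(12):
        print(step, sorted(sounding_at(step, LOOPS)))
```

Choosing pairwise coprime lengths (5, 7, 11) is what makes the combined cycle so much longer than any single loop.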

Speaking of generative music, here is an exciting introduction to analog-style generative music with VCV Rack.

Introduction to Generative Music w/ VCV Rack

I had a few questions on my last stream about how to make this kind of generative stuff in VCV Rack, so here we go! Hope you enjoy!

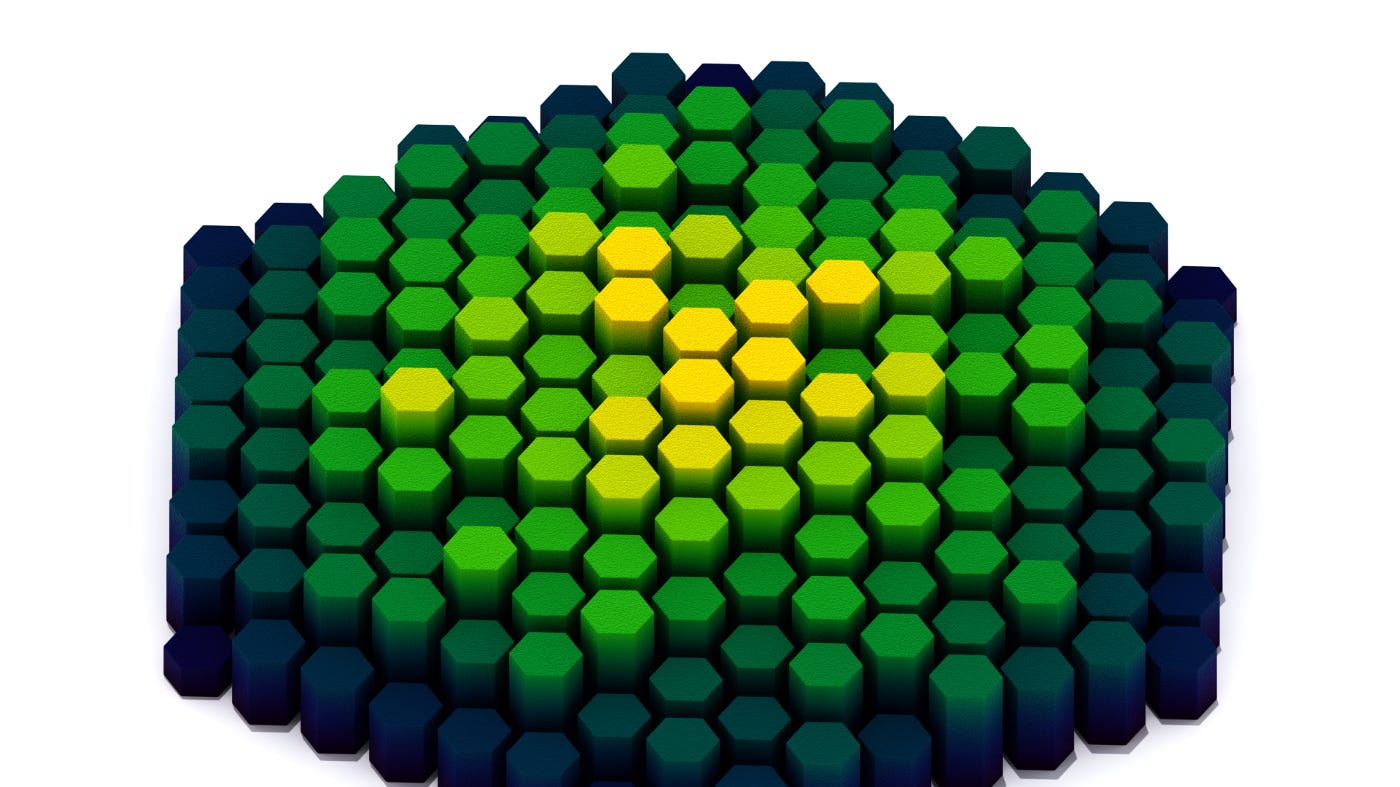

Scripting a Hexagon Grid in Blender

First, let’s review some geometry. Suppose we position the hexagon in a Cartesian coordinate system at the origin, (0.0, 0.0), where the positive x axis, (1.0, 0.0, 0.0), constitutes zero degrees of rotation. Positive on the y axis is forward, (0.0, 1.0, 0.0), and positive on the z axis is up, (0.0, 0.0, 1.0), which means that increasing the angle of rotation will give us counter-clockwise (CCW) rotation.
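Under the convention described above (+x at zero degrees, z up, angles increasing counter-clockwise), the corners of a regular hexagon fall at 60-degree steps around its center. The following is a minimal pure-Python sketch, not the tutorial's script; the function names and the flat-topped, offset-column grid layout are assumptions chosen for illustration.

```python
import math


def hexagon_vertices(center=(0.0, 0.0, 0.0), radius=1.0, start_angle=0.0):
    """Six corners, counter-clockwise from start_angle (radians, measured from +x).

    With z up and y forward, increasing the angle rotates CCW when viewed from above.
    """
    cx, cy, cz = center
    verts = []
    for i in range(6):
        theta = start_angle + i * math.tau / 6  # 60-degree steps
        verts.append((cx + radius * math.cos(theta), cy + radius * math.sin(theta), cz))
    return verts


def hex_grid_centers(cols, rows, radius=1.0):
    """Centers for flat-topped hexagons (a corner on +x).

    Columns are 1.5 * radius apart, rows are sqrt(3) * radius apart,
    and odd columns shift by half a row so neighbors share edges.
    """
    h = math.sqrt(3.0) * radius
    centers = []
    for col in range(cols):
        for row in range(rows):
            x = col * 1.5 * radius
            y = row * h + (h / 2 if col % 2 else 0.0)
            centers.append((x, y, 0.0))
    return centers


if __name__ == "__main__":
    for v in hexagon_vertices():
        print(tuple(round(c, 3) for c in v))
    print(hex_grid_centers(2, 2))
```

Inside Blender, lists of vertices like these could be handed to a mesh via `Mesh.from_pydata`; that step is left out here so the sketch runs outside Blender.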

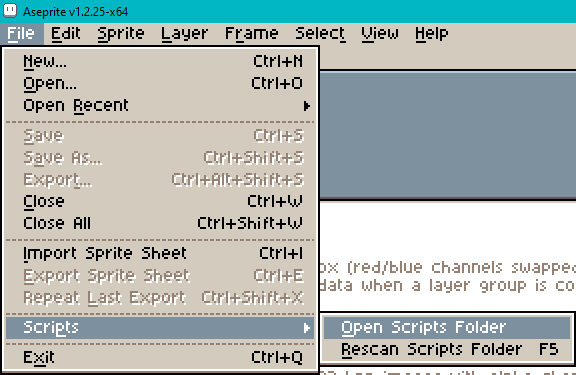

How to Script Aseprite Tools In Lua

This tutorial introduces how to script an add-on for the pixel art editing program Aseprite. We do a series of “hello world” exercises to create dialog menus in the interface. We then look at two more in-depth tools: an object-oriented isometric grid and a conic gradient.

Unlike previous tutorials, the software discussed here is not free. As of writing, Aseprite costs $19.99. A free trial is available from their website. Free variants, such as LibreSprite, exist due to an earlier, more permissive license. However, Aseprite’s scripting API is a recent addition and so may not exist in these variants. As always, conduct comparative research before purchase. See, for example, this video by Brandon James Greer.
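The conic gradient mentioned above boils down to one idea: a pixel's color depends on its angle around a center point, not its distance. The Python sketch below shows only that per-pixel math under stated assumptions (the function names are hypothetical, and y is flipped so angles run counter-clockwise on screen); it does not use Aseprite's Lua scripting API or reproduce the tutorial's code.

```python
import math


def conic_factor(x, y, cx, cy, start_angle=0.0):
    """Map a pixel to a factor in [0, 1) by its angle around (cx, cy)."""
    # Image rows grow downward, so flip y to measure angles counter-clockwise on screen.
    theta = math.atan2(cy - y, x - cx) - start_angle
    t = (theta % math.tau) / math.tau
    return t if t < 1.0 else 0.0  # guard against float rounding up to exactly 1.0


def lerp_color(a, b, t):
    """Linearly interpolate two RGB tuples and round to integer channels."""
    return tuple(round(ca + (cb - ca) * t) for ca, cb in zip(a, b))


def conic_gradient(width, height, c0, c1, start_angle=0.0):
    """Return rows of RGB tuples sweeping from c0 to c1 around the image center."""
    cx, cy = (width - 1) / 2, (height - 1) / 2
    return [
        [lerp_color(c0, c1, conic_factor(x, y, cx, cy, start_angle)) for x in range(width)]
        for y in range(height)
    ]


if __name__ == "__main__":
    for row in conic_gradient(5, 5, (0, 0, 0), (255, 255, 255)):
        print(row)
```

A real tool would typically swap the two-color lerp for a lookup into a palette or color ramp, but the angle-to-factor step stays the same.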

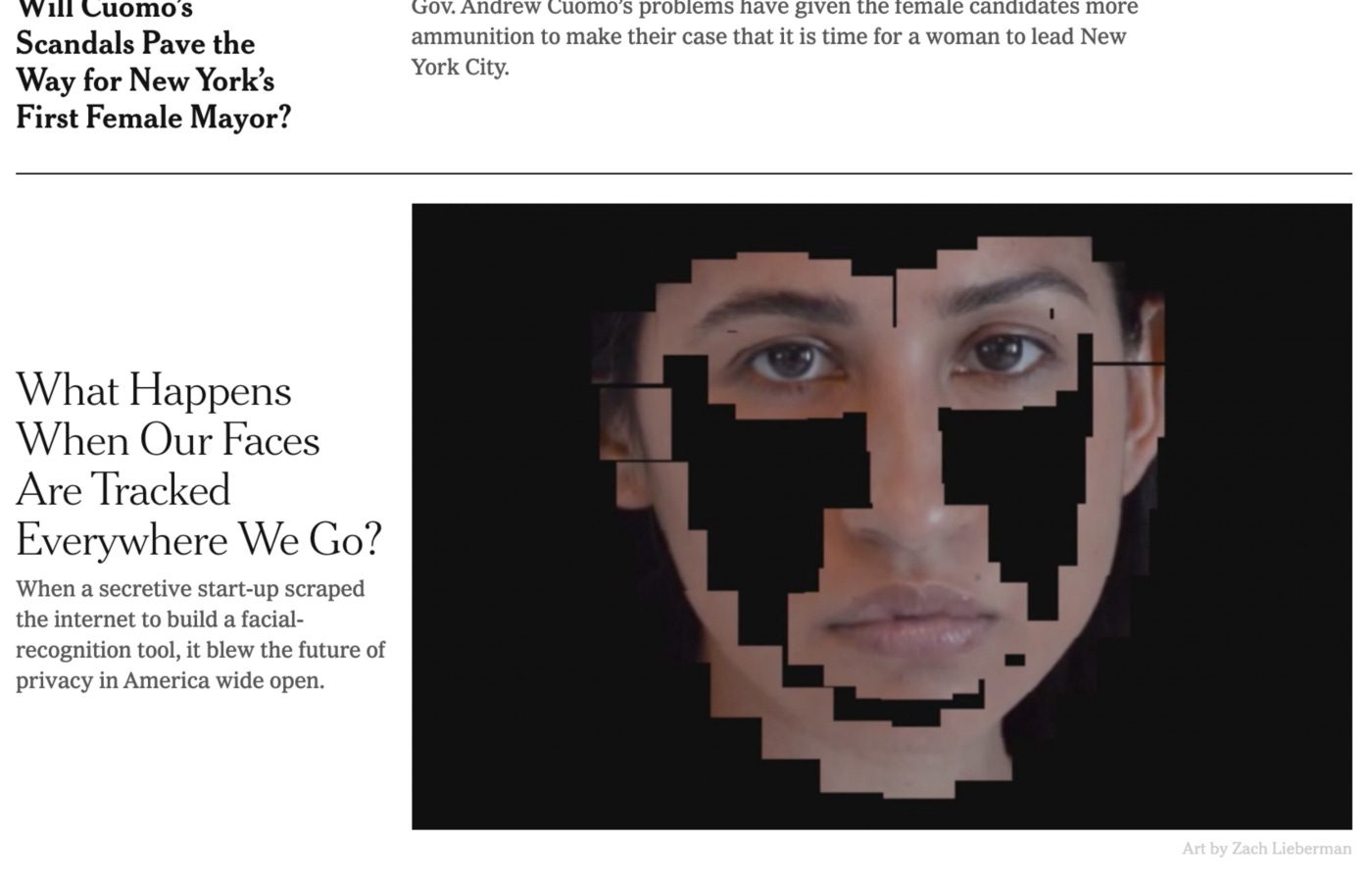

Coded Illustrations

One of the more exciting calls I get as an artist working in the field of new media is for editorial illustration work. It’s rare. I mostly do generative design as a commercial practice. I don’t have a background in illustration or even a portfolio of this kind of work, so I am always excited when an art director looks at my mostly abstract and gestural animations and inquires about a possible connection. I really enjoy brainstorming and thinking about what images or animation would help bring an article to life.

Writing a small software renderer

In computer graphics there exist many rendering algorithms, i.e. methods to display a virtual scene onto a screen, with various approaches and refinements. But only two main classes of those are currently used at a large scale. After starting to study in the field, I thought about trying to implement both to better grasp how they worked under the hood. This took a bit of time, as I needed to improve my comprehension of the subject and acquire enough background.
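The excerpt does not name the two classes, but the approaches in wide use today are rasterization and ray tracing. As a hedged illustration of the first, here is a minimal Python sketch (not the article's renderer) of edge-function rasterization: every pixel center inside the triangle's bounding box is tested against the triangle's three edges.

```python
import math


def edge(ax, ay, bx, by, px, py):
    """Twice the signed area of triangle (a, b, p); its sign tells which side of a->b p is on."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize(tri, width, height):
    """Return the set of (x, y) pixels whose centers fall inside the triangle (edges included)."""
    (x0, y0), (x1, y1), (x2, y2) = tri
    area = edge(x0, y0, x1, y1, x2, y2)
    if area == 0:
        return set()  # degenerate triangle covers nothing
    # Only test pixels inside the triangle's bounding box, clipped to the screen.
    min_x = max(math.floor(min(x0, x1, x2)), 0)
    max_x = min(math.ceil(max(x0, x1, x2)), width - 1)
    min_y = max(math.floor(min(y0, y1, y2)), 0)
    max_y = min(math.ceil(max(y0, y1, y2)), height - 1)
    covered = set()
    for y in range(min_y, max_y + 1):
        for x in range(min_x, max_x + 1):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(x1, y1, x2, y2, px, py)
            w1 = edge(x2, y2, x0, y0, px, py)
            w2 = edge(x0, y0, x1, y1, px, py)
            # Inside when all three edge values share the sign of the total area,
            # which makes the test work for either vertex winding order.
            if area > 0:
                inside = w0 >= 0 and w1 >= 0 and w2 >= 0
            else:
                inside = w0 <= 0 and w1 <= 0 and w2 <= 0
            if inside:
                covered.add((x, y))
    return covered


if __name__ == "__main__":
    W, H = 20, 10
    pixels = rasterize([(1, 1), (18, 3), (6, 9)], W, H)
    for y in range(H):
        print("".join("#" if (x, y) in pixels else "." for x in range(W)))
```

Dividing w0, w1, and w2 by area gives barycentric coordinates, which a fuller rasterizer uses to interpolate depth, color, or texture coordinates across the triangle.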